[Notes from Pinal]: SSIS is very well explored subject, however, there are so many interesting elements when we read, we learn something new. A similar concept has been Using the SSIS Term Extraction for Data Exploration.

Linchpin People are database coaches and wellness experts for a data driven world. In this 46th episode of the Notes from the Fields series database expert Tim Mitchell (partner at Linchpin People) shares very interesting conversation related to how to understand SSIS Term Extraction for Data Exploration.

Linchpin People are database coaches and wellness experts for a data driven world. In this 46th episode of the Notes from the Fields series database expert Tim Mitchell (partner at Linchpin People) shares very interesting conversation related to how to understand SSIS Term Extraction for Data Exploration.

Data exploration is an essential piece of any new ETL (extraction-transformation-load) process. Knowing the structure, type, and even the semantics of data sources can help the ETL developer or architect to make better decisions on data type and length settings, transformation logic, and error handling procedures. Additionally, there are situations in which the exploration of the data is the principal purpose of the ETL, such as text mining and other pattern analysis. In most scenarios, SQL Server Integration Services is used as a traditional ETL tool and not necessarily for data exploration or text mining. However, there are some tools built into SSIS that are ideally suited for exploring source feed to expose patterns in the data. In this brief post, I’ll cover one such tool: the term extraction component.

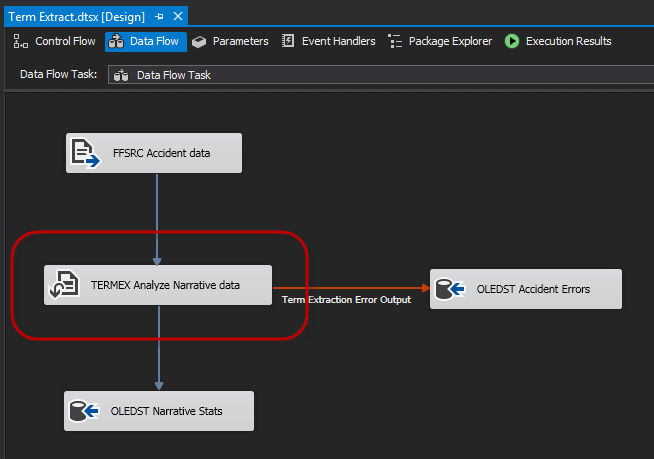

The term extraction component is a data flow transformation that will aggregate the frequency of words found in a specified column supplied by an upstream data source. This component expects a Unicode text or text stream field as an input, and calculates as an output the frequency of the nouns and/or noun phrases in the specified source column. As shown below on the data flow surface, the term extraction component (circled) is always used as a transformation, accepting exactly one input and expecting either one or two outputs (the second being an optional error output).

Configuring the term extraction component can be a relatively simple exercise. As mentioned, you’ll need to specify the upstream column that will be used as an input, and this column must be Unicode text (DT_WSTR) or stream (DT_NTEXT).

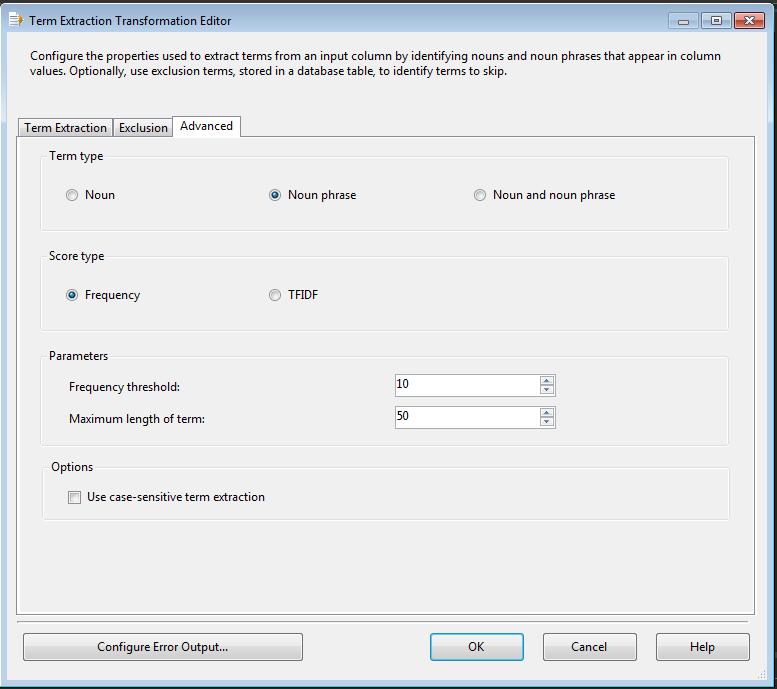

Purposefully skipping over the Exclusion tab for just a moment, we’ll review the Advanced tab. This pane exposes several of the key configuration elements of the term extraction component. In particular, we can use this page to set the output for noun and/or noun phrase, set the score type to frequency (raw count) or TFIDF (a calculation based both on the frequency of the term and the ratio of the analyzed terms to the entire document), and set the minimum score threshold and maximum term length. We can also set the case-sensitive flag, in case our analytics need to be case sensitive.

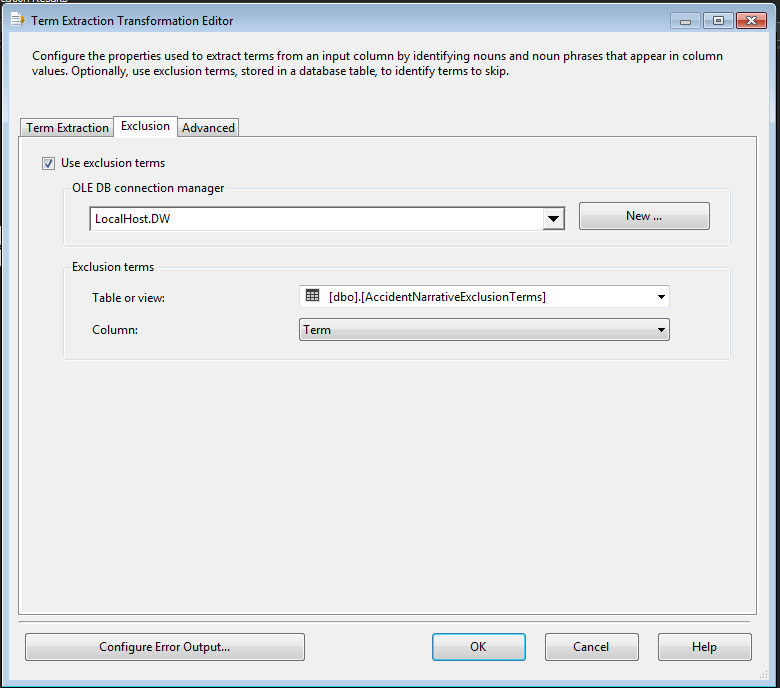

Additionally, the exclusion list (on the Exclusion tab) can help to refine your calculation results. This feature allows the ETL developer to specify a known list of terms that will be excluded from the output. This is useful in cases where industry or common language terms might occur so frequently that including them in the output simply dilutes the rest of the data.

Although the term extraction component does not have a lot of moving parts, it does provide a quick way to perform data exploration and simple text analysis.

Conclusion

In this post, I have briefly demonstrated the attributes of the term extraction transformation, an SSIS data flow tool that can be used for data exploration. This tool is a quick and easy way to perform triage analysis and mining of raw text data.

If you want me to take a look at your server and its settings, or if your server is facing any issue we can Fix Your SQL Server.

Reference: Pinal Dave (https://blog.sqlauthority.com)