Last week, I published details about SQL SERVER – Plan Caching in SQL Server 2008 by Greg Low on this blog. As with any other white paper, I read this one very carefully and enjoyed it. One topic in the white paper that caught my attention is the definition of schema change. I was well aware of this definition, but I have often found that users are not familiar with what exactly a schema change means.

Many people assume that only a change in the table structure is a schema change. In fact, creating or dropping an index on a table also qualifies as a schema change.

As per the white paper, “Schema change” is defined as follows:

- Adding or dropping columns to a table or view.

- Adding or dropping constraints, defaults, or rules to/from a table.

- Adding an index to a table or an indexed view.

- Dropping an index defined on a table or an indexed view (only if the index is used by the query plan in question).

- Dropping a statistic (not creating or updating!) defined on a table will cause a correctness-related recompilation of any query plans that use that table. Such recompilations occur at that instant when the query plan in question begins execution. Updating a statistic (both manual and auto-update) will cause an optimality-related (data related) recompilation of any query plans that uses this statistic.
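To make the list above concrete, here is a minimal sketch of statements that fall under each category. The table, constraint, index, and statistic names are all hypothetical and exist only for illustration; they do not come from the white paper.

```sql
-- Hypothetical demo table; every object name below is an assumption.
CREATE TABLE dbo.SchemaChangeDemo (ID INT NOT NULL, Col1 VARCHAR(50) NULL);
GO
-- Adding a column to a table.
ALTER TABLE dbo.SchemaChangeDemo ADD Col2 INT NULL;
GO
-- Adding a constraint (here, a default) to a table.
ALTER TABLE dbo.SchemaChangeDemo
    ADD CONSTRAINT DF_SchemaChangeDemo_Col2 DEFAULT (0) FOR Col2;
GO
-- Adding an index to a table.
CREATE NONCLUSTERED INDEX IX_SchemaChangeDemo_ID ON dbo.SchemaChangeDemo (ID);
GO
-- Dropping an index defined on a table.
DROP INDEX IX_SchemaChangeDemo_ID ON dbo.SchemaChangeDemo;
GO
-- Creating a statistic is not a schema change per the list above; dropping it is.
CREATE STATISTICS ST_SchemaChangeDemo_Col1 ON dbo.SchemaChangeDemo (Col1);
GO
DROP STATISTICS dbo.SchemaChangeDemo.ST_SchemaChangeDemo_Col1;
GO
-- Clean up the demo table.
DROP TABLE dbo.SchemaChangeDemo;
GO
```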

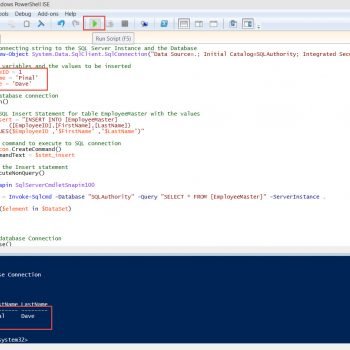

Based on the above definition, I decided to experiment with schema change. The white paper mentions that a schema change will cause the affected batch to be recompiled. I created a small script (given below) to test this.

In the script, I created a large table and a stored procedure that accesses that table. I then created an index on the table. When the stored procedure was run with the index on the table, it showed a plan different from the one it showed without the index. It was evident that the batch was recompiled when the index was added to the table.
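For readers who want to try this, the experiment can be sketched roughly as follows. This is not the original script: the table, procedure, and index names, the row count, and the parameter value are all assumptions chosen for illustration. Run it with the actual execution plan enabled in Management Studio to compare the two executions.

```sql
-- Hypothetical objects; the original script may differ in every detail.
CREATE TABLE dbo.RecompileTest
(
    ID        INT          NOT NULL,
    FirstName VARCHAR(100) NOT NULL
);
GO
-- Populate the table with 100,000 rows using a cross join of a system view.
INSERT INTO dbo.RecompileTest (ID, FirstName)
SELECT TOP (100000)
       ROW_NUMBER() OVER (ORDER BY a.object_id),
       'Name'
FROM sys.all_objects AS a
CROSS JOIN sys.all_objects AS b;
GO
-- Stored procedure that accesses the table.
CREATE PROCEDURE dbo.GetRecompileTestRow @ID INT
AS
    SELECT ID, FirstName
    FROM dbo.RecompileTest
    WHERE ID = @ID;
GO
-- First run: no index exists, so the plan is expected to scan the table.
EXEC dbo.GetRecompileTestRow @ID = 500;
GO
-- Schema change: add an index to the table.
CREATE NONCLUSTERED INDEX IX_RecompileTest_ID ON dbo.RecompileTest (ID);
GO
-- Second run: the statement should be recompiled and can now use the index.
EXEC dbo.GetRecompileTestRow @ID = 500;
GO
```

The exact plan shapes depend on the data and the server, so treat the comments about scans and seeks as expectations, not guarantees.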

The script is available here to download.

Without Index execution plan:

With Index execution plan:

The two execution plans are clearly different, which supports the point that a schema change causes the execution plan to be recompiled.

There is one more script provided in the white paper, which gets data about cached plans from the DMVs. When I ran the following query, the results were not what I expected.

    SELECT cp.objtype AS PlanType,
           OBJECT_NAME(st.objectid, st.dbid) AS ObjectName,
           cp.refcounts AS ReferenceCounts,
           cp.usecounts AS UseCounts,
           st.text AS SQLBatch,
           qp.query_plan AS QueryPlan
    FROM sys.dm_exec_cached_plans AS cp
    CROSS APPLY sys.dm_exec_query_plan(cp.plan_handle) AS qp
    CROSS APPLY sys.dm_exec_sql_text(cp.plan_handle) AS st;

When I ran the above script, I found that the UseCounts column did not reset when the query plan changed. This was very surprising, as I was expecting UseCounts to reset when the query plan changed. I sent an email to Dr. Greg Low, who is the author of this white paper. He was very kind to respond within minutes with a proper answer, which is as follows:

You’ll notice in Profiler when you run the sproc after creating the index that it does in fact cause a recompile. However, in SQL Server 2008 (and 2005), these are statement level recompiles not proc level recompiles. I imagine that’s why they’re not reflected in the UseCount values ie: the plan itself isn’t recompiled and that’s what the counts are based on.
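One way to see statement-level recompiles without Profiler is to look at the `plan_generation_num` column of `sys.dm_exec_query_stats` next to `usecounts`. The query below is only a sketch: `dbo.GetRecompileTestRow` is a hypothetical procedure name, so substitute the procedure you are testing. If the explanation above holds, `PlanGenerationNum` should increase for the recompiled statement while `UseCounts` for the cached plan keeps growing.

```sql
-- dbo.GetRecompileTestRow is a hypothetical name; replace it with your procedure.
SELECT OBJECT_NAME(st.objectid, st.dbid) AS ObjectName,
       cp.usecounts                      AS UseCounts,         -- counted per cached plan
       qs.plan_generation_num            AS PlanGenerationNum, -- changes after a recompile
       qs.execution_count                AS ExecutionCount,
       -- Extract the individual statement text from the batch text.
       SUBSTRING(st.text,
                 (qs.statement_start_offset / 2) + 1,
                 ((CASE qs.statement_end_offset
                       WHEN -1 THEN DATALENGTH(st.text)
                       ELSE qs.statement_end_offset
                   END - qs.statement_start_offset) / 2) + 1) AS StatementText
FROM sys.dm_exec_query_stats AS qs
INNER JOIN sys.dm_exec_cached_plans AS cp
        ON cp.plan_handle = qs.plan_handle
CROSS APPLY sys.dm_exec_sql_text(qs.sql_handle) AS st
WHERE st.objectid = OBJECT_ID('dbo.GetRecompileTestRow')
  AND st.dbid = DB_ID();
```

Running this once before and once after the schema change, with an execution of the procedure in between, makes the two counters easy to compare.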

Well, this simple explanation clarified my doubts. Let me know what you think, and I strongly suggest that you all read the white paper written by Dr. Greg Low.

Reference : Pinal Dave (https://blog.sqlauthority.com)
