In this quick guide, let us learn about execution plans and indexing strategies. Your comments are welcome.

SQL SERVER and AI – Setting Up an MCP Server for Natural Language Tuning

Let us learn more about setting up an MCP server for natural language tuning in SQL Server, with detailed instructions.

SQL SERVER Performance – JSON vs XML

What started as a simple recommendation turned into a comprehensive benchmarking exercise. Let us look at this blog post about JSON vs XML.

SQL SERVER Performance: When MAXDOP = 1 Slowed Down the Entire Business

Performance was consistently poor, especially during periods of high activity. Let us discuss the SQL SERVER Performance case study: When MAXDOP = 1 Slowed Down the Entire Business.

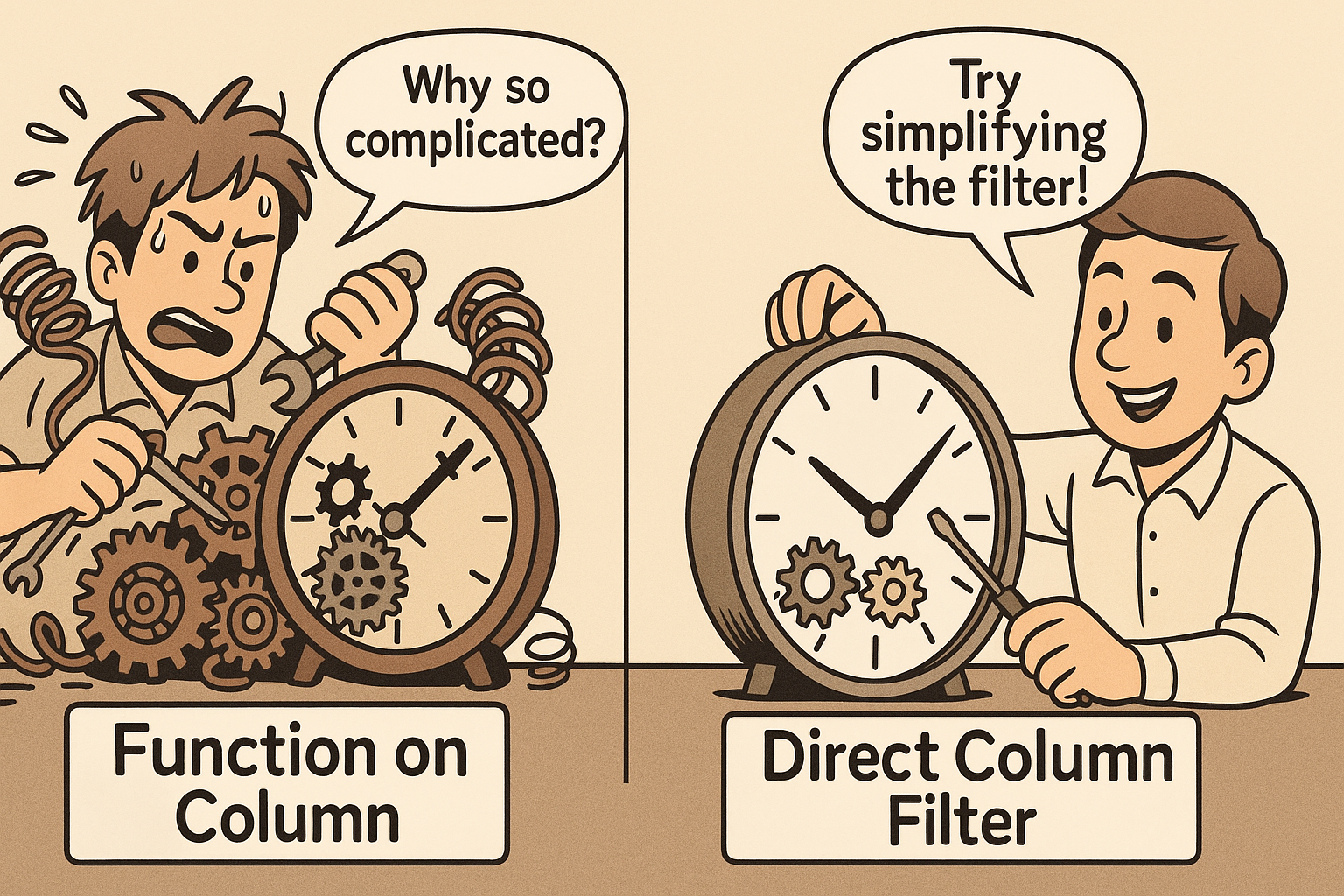

SQL SERVER – Catching Non-SARGable Queries in Action

If you have ever wondered why a query that looks harmless performs poorly despite having indexes in place, the answer could be non-SARGable queries.
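To make the idea concrete, here is a minimal sketch of a non-SARGable predicate next to a SARGable rewrite. It is not taken from the post: it uses Python's built-in `sqlite3` module as a stand-in for SQL Server so it can run anywhere, and the `orders` table, its columns, and the index name are all hypothetical. The SQL Server equivalent of the slow form would be wrapping the column in a function such as `YEAR(order_date) = 2024`; the plan output there looks different, but the principle (a function on the column hides it from the index) is the same.

```python
import sqlite3

def plan(conn, sql):
    """Return the access-path descriptions SQLite reports for a query."""
    # The fourth column of EXPLAIN QUERY PLAN holds the human-readable detail.
    return [row[3] for row in conn.execute("EXPLAIN QUERY PLAN " + sql)]

# Hypothetical schema, used only for illustration.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, order_date TEXT, amount REAL)"
)
conn.execute("CREATE INDEX ix_orders_order_date ON orders (order_date)")
conn.executemany(
    "INSERT INTO orders (order_date, amount) VALUES (?, ?)",
    [
        ("2023-12-31", 10.0),
        ("2024-01-15", 20.0),
        ("2024-06-30", 30.0),
        ("2025-01-01", 40.0),
    ],
)

# Non-SARGable: the indexed column is wrapped in a function,
# so the engine has to evaluate it for every row.
non_sargable = (
    "SELECT id, amount FROM orders WHERE strftime('%Y', order_date) = '2024'"
)

# SARGable: the bare column is compared against a range,
# so the index on order_date can be used to locate the rows.
sargable = (
    "SELECT id, amount FROM orders "
    "WHERE order_date >= '2024-01-01' AND order_date < '2025-01-01'"
)

non_sargable_plan = plan(conn, non_sargable)
sargable_plan = plan(conn, sargable)
print(non_sargable_plan)
print(sargable_plan)
```

Both queries return the same rows. Only the second plan should report an index search rather than a full scan, which is the difference to look for in an execution plan.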

SQL SERVER Performance Tuning: Catching Key Lookup in Action

In this blog post, we’ll explore what a Key Lookup is, how to spot it, and most importantly, how to eliminate it using a covering index.

SQL SERVER – Understanding WITH RECOMPILE in Stored Procedures

The culprit? Parameter sniffing. The solution? You guessed it: WITH RECOMPILE.