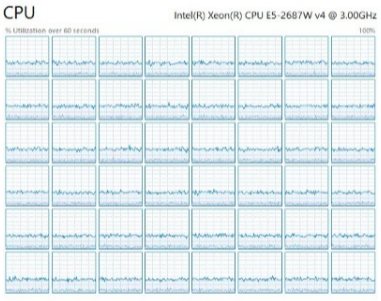

With the new Intel Skylake CPUs coming out recently it seemed like a good time to discuss CPU resources and how to know if they’re running optimally. After all, there’s little sense in upgrading your server if your current one is already data starved. Let us learn about how to balance your SQL Server facing data starvation.

What is Data Starvation?

Data Starvation is when CPU cores have unused time slices due to waiting for data. Databases need data and if time slices are unused, then it makes queries last longer, wastes CPU licenses, etc. This is typically caused by an imbalance between CPU and I/O subsystem performance.

Skylake offers up to 26 physical cores per CPU. With Hyperthreading, that’s 208 logical cores in a 4-CPU server (common for analytics). That’s a lot of parallel streams to feed.

Back to Data Starvation. There are two types:

- Bandwidth: Not supplying enough bulk data to feed the processors grinding through reports in parallel.

- Latency: Not returning I/O requests fast enough to keep the CPU cores fully active.

Bandwidth:

SQL Server has made I/O engine improvements over the versions (like larger read-ahead batch sizes, etc). Multiply these by expanding the numbers of cores per server and that means vast amounts of data can be processed.

Ok, so what can a SQL Server do?

Recently I borrowed a SAN array from my friends at Vexata and ran some TPC-H like workloads. I was able to get a single 2-CPU SQL Server running at 25GB/s of reads plus 11GB/s of writes for a total of 36GB/s. That’s a ton of data crunching in one small server.

To create a ballpark method for sizing servers I divided my peak bandwidths by the number of cores on my two test systems. This gives the per-core bandwidths the SQL Server is capable of.

| Test System | Read GB/s | Write GB/s | Per Core MB/s (r/w) |

| Skylake 72-cores 2.7GHz (32Gb FC) | 25 | 11 | (350 / 150) |

| Broadwell 48-cores 2.4GHz (16Gb FC) | 12 | 5 | (250 / 100) |

Latency:

When SQL Server attempts a transaction the first step is to make I/O reads for the index and then data pages. Transactions can’t start until the data has been loaded. For larger tables, there can be 10 or more index levels to hop before getting to the data page. And, this is done sequentially. This is why common OLTP workload testing profiles are 70/30 r/w as the most common I/O is the initial index traversing and data reads. If applications seem sluggish and the cores aren’t over 90% then I/Os could be pending.

In part 2 we’ll cover how to use SQL Server tools to determine if data starvation is your bottleneck.

Call to Action: Meanwhile, check out Vexata solutions for SQL Server.

Reference: Pinal Dave (https://blog.sqlauthority.com)

2 Comments. Leave new

Shouldn’t the caption for the graphs be interchanged ? High CPU utilization (=> not waiting for pending I/O to complete) is the lower set of plots but the caption says “CPU Starved”

Does this go against MAXDOP standards? Seems like maxdop = 0?