SQL Server backups are essential for any business that stores its data in SQL Server. The following is a list of best practices you should follow if you are the person in charge of maintaining your organization’s databases. Let us look at some SQL Server backup tips.

1. Backups should not be stored in the same physical location as database files

This very simple rule will save your business from a lot of difficult situations in the event that a physical drive becomes faulty.

If this happens, you should be able to switch to another drive or connect to a remote location that holds a backup of your database, and restore the database from there.
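One way to audit this rule is to compare where recent backups were written with where the database files live. The query below is a minimal sketch that uses the backup history tables in msdb. It only compares the leading drive letter, so it assumes local drive-letter paths and will not catch mount points, UNC paths, or two drive letters that sit on the same physical disk.

```sql
-- Sketch: flag backups from the last 7 days that were written to the same
-- drive letter as one of the database's own data or log files.
-- Assumption: paths look like 'X:\...'; UNC paths and mount points are ignored.
SELECT DISTINCT
       bs.database_name,
       bmf.physical_device_name AS backup_file,
       mf.physical_name         AS database_file
FROM msdb.dbo.backupset AS bs
JOIN msdb.dbo.backupmediafamily AS bmf
  ON bmf.media_set_id = bs.media_set_id
JOIN sys.databases AS d
  ON d.name COLLATE DATABASE_DEFAULT = bs.database_name COLLATE DATABASE_DEFAULT
JOIN sys.master_files AS mf
  ON mf.database_id = d.database_id
WHERE bs.backup_finish_date >= DATEADD(DAY, -7, GETDATE())
  AND LEFT(mf.physical_name, 2) LIKE '[A-Za-z]:'
  AND UPPER(LEFT(bmf.physical_device_name, 2)) COLLATE DATABASE_DEFAULT
      = UPPER(LEFT(mf.physical_name, 2)) COLLATE DATABASE_DEFAULT;
```

Any row returned points at a backup that would probably be lost together with the database files if that drive failed.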

2. Backup schedules are set up

Another good safety precaution is to run your backups on a proper schedule, established to meet the needs of the application and your particular business requirements.

Creating backup schedules is very important because as time passes and your backups get older, the risk of data loss becomes higher, unless you are protected and you have a way to reestablish all the data up to the point of failure.

Backup schedules also give you a consistent history of your data, which is useful in more than just disaster situations.
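If SQL Server Agent is available on your instance (it is not part of the Express edition), a schedule can be set up entirely in T-SQL. The following is a rough sketch using the standard msdb job procedures. The job name, schedule name and start time are hypothetical, and the backup command simply reuses the example path from later in this article.

```sql
USE msdb;
GO

-- Hypothetical job that runs the article's example backup command.
EXEC dbo.sp_add_job
     @job_name = N'Nightly FULL backup - MyDatabase';

EXEC dbo.sp_add_jobstep
     @job_name      = N'Nightly FULL backup - MyDatabase',
     @step_name     = N'Back up MyDatabase',
     @subsystem     = N'TSQL',
     @database_name = N'master',
     @command       = N'BACKUP DATABASE MyDatabase TO DISK = ''Z:\MyBackups\MyDatabase.bak'' WITH CHECKSUM;';

-- freq_type 4 = daily, freq_interval 1 = every day,
-- active_start_time is HHMMSS as an integer, so 10000 means 01:00:00.
EXEC dbo.sp_add_schedule
     @schedule_name     = N'Daily at 1 AM',
     @freq_type         = 4,
     @freq_interval     = 1,
     @active_start_time = 10000;

EXEC dbo.sp_attach_schedule
     @job_name      = N'Nightly FULL backup - MyDatabase',
     @schedule_name = N'Daily at 1 AM';

-- Target the local server so that SQL Server Agent actually runs the job.
EXEC dbo.sp_add_jobserver
     @job_name = N'Nightly FULL backup - MyDatabase';
GO
```

Because the command writes to the same file every night without INIT, each run appends a new backup set to that file. A real job would normally use a date-stamped file name or a retention policy.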

3. Test the restore procedure of your backup on a test server

You should always try restoring your backups on a test server, and make sure that you can recover all of the data with all the options you would need during a restore on the main server.

The fact that a backup process has finished successfully does not guarantee that the backup can also be restored. You might not be aware that your backup was not created correctly, and when you try to restore it on the main server, the process might fail due to media corruption or other factors.
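A test restore could look roughly like the sketch below. The backup path is the example path used later in this article. The logical file names, the target folder and the name of the restored copy are assumptions for illustration, so take the real logical names from the RESTORE FILELISTONLY output first.

```sql
-- 1. Inspect the backup to learn the logical file names it contains.
RESTORE FILELISTONLY
FROM DISK = 'Z:\MyBackups\MyDatabase.bak';

-- 2. Restore under a different name on the test server.
--    'MyDatabase' and 'MyDatabase_log' are assumed logical names and
--    'D:\TestData\' is a hypothetical folder.
--    CHECKSUM makes the restore fail if the backup was taken without checksums;
--    remove it for backups that were not created WITH CHECKSUM.
RESTORE DATABASE MyDatabase_RestoreTest
FROM DISK = 'Z:\MyBackups\MyDatabase.bak'
WITH MOVE 'MyDatabase'     TO 'D:\TestData\MyDatabase_RestoreTest.mdf',
     MOVE 'MyDatabase_log' TO 'D:\TestData\MyDatabase_RestoreTest_log.ldf',
     CHECKSUM,
     RECOVERY;

-- 3. Check the restored copy for corruption.
DBCC CHECKDB (MyDatabase_RestoreTest) WITH NO_INFOMSGS;
```

If the restore completes and DBCC CHECKDB reports no errors, you have good evidence that this particular backup is usable.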

4. Make use of all available verification options when doing backup

Another good practice is to use all options provided in the process of backing up your database in order to make sure that the end result is a transactionally consistent backup.

If you are using T-SQL scripts to back up your database, add the CHECKSUM option to the WITH clause of the BACKUP command. With this option, SQL Server verifies the checksum of each page that has one as it reads the page, and generates a checksum for the backup as a whole, so that corruption can be detected later on the backup media.

BACKUP DATABASE MyDatabase TO DISK = 'Z:\MyBackups\MyDatabase.bak' WITH CHECKSUM;

If you prefer doing your backups manually through a visual interface like the one available in SQL Server Management Studio (SSMS), make sure to check the first two checkboxes in the Reliability section, on the Options page.

These two options, Verify backup when finished and Perform checksum before writing to media, add a level of verification to your backup process, which helps ensure that your backups are consistent.

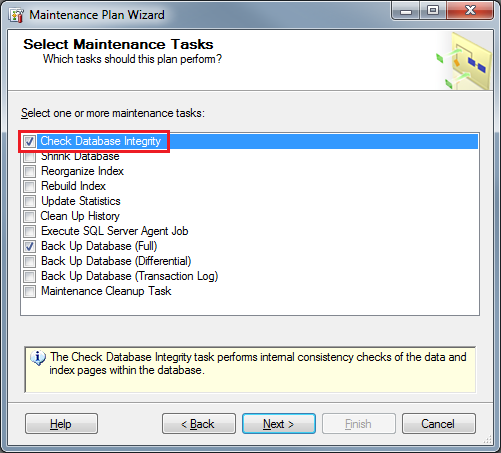
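For readers who script their backups, the two SSMS checkboxes correspond roughly to the pair of statements below. This is a sketch that reuses the example path from above and assumes the file holds a single backup set.

```sql
-- "Perform checksum before writing to media": validate page checksums while
-- reading the database and store a checksum for the backup as a whole.
BACKUP DATABASE MyDatabase
TO DISK = 'Z:\MyBackups\MyDatabase.bak'
WITH CHECKSUM;

-- "Verify backup when finished": confirm that the backup set is complete and
-- readable, and re-check the checksums, without restoring any data.
RESTORE VERIFYONLY
FROM DISK = 'Z:\MyBackups\MyDatabase.bak'
WITH CHECKSUM;
```

RESTORE VERIFYONLY does not check the structure of the data inside the backup, so it complements a real test restore rather than replacing it.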

If you choose a different wizard to do your backups, like the one available through SQL Server Maintenance Plans, make sure to tick the box that corresponds to the Check Database Integrity option.

A different piece of software, which I prefer to use for this operation mainly because of its simplicity, is SQLBackupAndFTP. It has all the most important options in an intuitive, easy-to-access interface.

5. Do a FULL backup daily

Based on the needs of your organization, you should choose a recovery model that protects your organization against data loss. If your organization can afford to lose 15, 30 or 60 minutes’ worth of data, the simple recovery model is the option you should go for. Under the simple recovery model there are no transaction log backups, so you can recover only to the most recent full or differential backup.
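To see which recovery model each database currently uses, and to change it, something along these lines should work. MyDatabase is the example database name used elsewhere in this article.

```sql
-- Show the current recovery model of every database on the instance.
SELECT name, recovery_model_desc
FROM sys.databases
ORDER BY name;

-- Switch the example database to the simple recovery model.
ALTER DATABASE MyDatabase SET RECOVERY SIMPLE;

-- The full recovery model is what you would choose instead if you plan to
-- take transaction log backups:
-- ALTER DATABASE MyDatabase SET RECOVERY FULL;
```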

In this case, a FULL database backup is the best protection against data loss in any data protection plan, and in most cases it should be performed daily, despite the overhead added by the time such an operation requires.

If your organization’s databases are small with easily recoverable data, then a FULL backup should be the way to go.

As I am already using SQLBackupAndFTP for my backup process, I find it very convenient that its default schedule does a FULL backup every 24 hours.

This is very helpful, because it removes the risk of overlooking this most important detail.

The biggest advantage of this practice is that recovery is easier: the backup is just one file and no log backup is needed. The downside is that data can be recovered only up to the time of the backup and that, depending on the size of your database, the backup can take up a lot of disk space.
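As an illustration, a daily FULL backup can write to a date-stamped file so that each day's backup is kept separately, and the msdb history can then confirm when each database was last fully backed up. The file naming pattern below is an assumption, not something prescribed by SQL Server.

```sql
-- Build a file name such as Z:\MyBackups\MyDatabase_FULL_20240115.bak
-- (style 112 formats the date as yyyymmdd).
DECLARE @file nvarchar(260) =
    N'Z:\MyBackups\MyDatabase_FULL_' + CONVERT(nchar(8), GETDATE(), 112) + N'.bak';

BACKUP DATABASE MyDatabase
TO DISK = @file
WITH CHECKSUM;

-- When did each database last get a FULL backup? (type 'D' = full database)
-- A NULL in last_full_backup means no FULL backup is recorded in msdb.
SELECT d.name,
       MAX(bs.backup_finish_date) AS last_full_backup
FROM sys.databases AS d
LEFT JOIN msdb.dbo.backupset AS bs
  ON bs.database_name COLLATE DATABASE_DEFAULT = d.name COLLATE DATABASE_DEFAULT
 AND bs.type = 'D'
WHERE d.name <> 'tempdb'
GROUP BY d.name
ORDER BY last_full_backup;
```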

6. Do differential backups more frequently

If you consider FULL backups too expensive for your organization, from a resource availability and data loss point of view, there is the option of doing differential backups of your databases, which can and should be done more frequently than FULL backups.

In terms of advantages, differential backups take less time to complete and less disk space, as they contain only the data that has changed since the last FULL backup. No log backup is needed in this case either, and restores are more precise in terms of data.

The disadvantages are that the data can still be recovered only up to the time of the differential backup, and that the restore process is a bit more complicated because two files are involved.
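The two-file restore mentioned above can be sketched as follows. The differential file name is hypothetical, and the example assumes that each file holds a single backup set.

```sql
-- Differential backup: only the data changed since the last FULL backup.
BACKUP DATABASE MyDatabase
TO DISK = 'Z:\MyBackups\MyDatabase_DIFF.bak'
WITH DIFFERENTIAL, CHECKSUM;

-- Restore step 1: the FULL backup, left in the restoring state.
-- REPLACE overwrites the existing MyDatabase, so use it with care.
RESTORE DATABASE MyDatabase
FROM DISK = 'Z:\MyBackups\MyDatabase.bak'
WITH NORECOVERY, REPLACE;

-- Restore step 2: the most recent differential, then bring the database online.
RESTORE DATABASE MyDatabase
FROM DISK = 'Z:\MyBackups\MyDatabase_DIFF.bak'
WITH RECOVERY;
```

Only the latest differential is needed on top of the FULL backup, because each differential contains all changes since that FULL backup.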

7. Do transaction log backups even more frequently

The next most important step in doing backups, after FULL and differential backups, is to back up the transaction log. As the transaction log contains all the recent activity in the database, it can be used to restore the database to a specific point in time, which is its biggest advantage.

Doing a transaction log backup also truncates the log, which keeps it from becoming full. Similar to database backups, transaction log backups can be done while the system is active.

If some of your organization’s databases see high activity, backing up their transaction logs every 10 minutes is recommended, while less active databases might have their transaction logs backed up every 30 or 60 minutes.

The negative side of this type of backup is that the database must use the full or bulk-logged recovery model, which increases the size of the transaction log and the effort required during the restore process.
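A log backup and a point-in-time restore might look like the sketch below. The .trn file name and the STOPAT timestamp are made up for illustration, and the example assumes one FULL backup followed by a single log backup, each in its own file.

```sql
-- Log backups need the full (or bulk-logged) recovery model and at least
-- one FULL backup taken after switching to it.
BACKUP LOG MyDatabase
TO DISK = 'Z:\MyBackups\MyDatabase_LOG.trn'
WITH CHECKSUM;

-- Point-in-time restore, step 1: the FULL backup, left in the restoring state.
-- REPLACE overwrites the existing MyDatabase, so use it with care.
RESTORE DATABASE MyDatabase
FROM DISK = 'Z:\MyBackups\MyDatabase.bak'
WITH NORECOVERY, REPLACE;

-- Step 2: replay the log up to the chosen moment (hypothetical timestamp),
-- then bring the database online.
RESTORE LOG MyDatabase
FROM DISK = 'Z:\MyBackups\MyDatabase_LOG.trn'
WITH STOPAT = '2024-01-15T10:30:00', RECOVERY;
```

With several log backups, each one is restored in sequence WITH NORECOVERY, and only the last one carries STOPAT and RECOVERY.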

8. Practice recovery operations

A successful company is flexible and quickly adapts to changes in the market. When business requirements change unexpectedly, your backup strategies can become obsolete.

Test your backup strategies frequently, covering the different scenarios that might arise, including both full system restores and individual database restores. This will ensure that your backup plans are as effective as expected and work when they are needed.
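To check how recently restores have actually been practiced, the restore history that SQL Server keeps in msdb can help. The query below is a small sketch, to be run on the server where the practice restores take place.

```sql
-- Most recent restores recorded on this instance, with the backup they used.
SELECT rh.destination_database_name,
       rh.restore_date,
       rh.restore_type,                 -- D = database, I = differential, L = log
       bs.database_name AS source_database,
       bs.backup_finish_date
FROM msdb.dbo.restorehistory AS rh
JOIN msdb.dbo.backupset AS bs
  ON bs.backup_set_id = rh.backup_set_id
ORDER BY rh.restore_date DESC;
```

An empty result, or a most recent restore_date that is months old, suggests that recovery has not been rehearsed lately.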

9. Regularly back up system databases

Even though your backup strategy will save you from losing user data, it is incomplete without a backup plan for your SQL Server system databases: master, model and msdb.

These databases are essential because they contain system configuration along with SQL Server job information which has to be restored in case of a total system restore.

You should also keep a strict and frequent plan for backing up your system databases, preferably on a daily basis, if the configuration of your instance changes frequently. For installations that are more stable, you can do this backup operation less often.
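In T-SQL, backing up the three system databases is no different from backing up a user database. The sketch below reuses the example backup folder from earlier in this article, and the file names are assumptions.

```sql
-- FULL backups of the system databases.
-- Note: master supports only FULL backups.
BACKUP DATABASE master TO DISK = 'Z:\MyBackups\master.bak' WITH CHECKSUM;
BACKUP DATABASE model  TO DISK = 'Z:\MyBackups\model.bak'  WITH CHECKSUM;
BACKUP DATABASE msdb   TO DISK = 'Z:\MyBackups\msdb.bak'   WITH CHECKSUM;
```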

If you have already considered taking a look at SQLBackupAndFTP for your backup process, I still have good news regarding this software.

It also lets you back up the system databases along with your user databases, with just one extra click. You still have the option to back these databases up on a schedule and to your favorite location, be it on the same disk or in a different location.

Conclusion

If you follow these best practices in your backup process, you will eventually find the most efficient combination of steps to manage your organization’s databases. Software is here to help us and make our lives easier, while making us more efficient. This is why, for some of the steps above, I recommend using SQLBackupAndFTP, which in my opinion has the most complete and easy-to-use set of tools necessary to manage your databases.

Also, by incorporating any of the steps above in your backup practices, you are going to improve your organization’s protection against data loss and speed up the recovery of your data.

Reference: Pinal Dave (https://blog.sqlauthority.com)

Comments

Very good article. If it’s OK with you, I will reblog it, and why not apply it to our production environment.

Thanks

Another set of options that should be considered is Ola Hallengren’s award-winning maintenance scripts, found at https://ola.hallengren.com/. They are free, loved far and wide, and just plain WORK, even alongside the various SQL Server backup software packages. They also provide index and statistics maintenance jobs and database check processes.