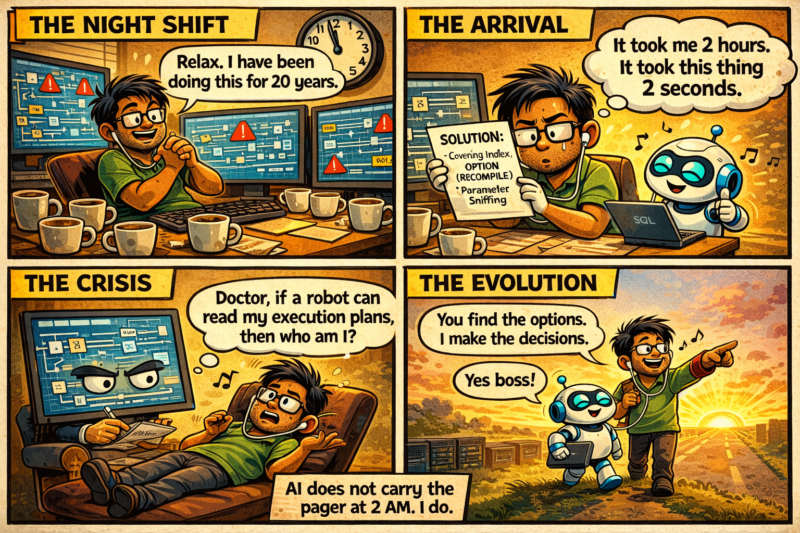

It was close to midnight and the system was not behaving the way it should. CPU was hovering around 85 percent, PAGEIOLATCH waits were climbing steadily, and one particular stored procedure had suddenly become the villain of the evening. I had the actual execution plan open. A Hash Match that clearly did not belong there. A Key Lookup that was blowing up row counts. An estimated versus actual row mismatch that was almost embarrassing to look at.

Let us talk about The Strange Emotional Shift of Working Alongside a Machine That “Thinks”.

The Night a Query Finished Before I Did

I have seen this pattern before. Cardinality estimation gone wrong. Parameter sniffing, maybe. Or outdated statistics. This is the kind of puzzle I have solved for decades. You start with sys.dm_exec_query_stats. You check wait stats. You glance at missing index DMVs, but you do not trust them blindly. You think about workload patterns, concurrency, memory grant pressure. It is not just technical analysis. It is instinct, built from years of late-night production calls and early-morning postmortems.

Out of curiosity more than need, I pasted the query and some surrounding details into an AI assistant.

Within seconds, it responded with structured reasoning. It pointed out the Key Lookup and suggested a covering nonclustered index. It mentioned parameter sniffing and recommended an OPTIMIZE FOR hint or OPTION (RECOMPILE). It even explained the row estimate mismatch in plain, simple language.

I stared at the screen.

Not because it was revolutionary. But because it was fast.

And in that speed, something inside me felt unsettled.

When Your Brain Has Always Been the Optimizer

For most of my career, I have joked that my brain is the real query optimizer. Before SQL Server decides on a plan, I have already predicted what it might choose. If a table has skewed data distribution, I can almost sense where the plan will break. If the memory grant is too generous, I anticipate spills to tempdb. If CXPACKET waits suddenly spike, I am already thinking about MAXDOP and cost threshold for parallelism before anyone opens sp_configure.

This ability did not come from reading documentation alone. It came from nights spent firefighting blocking chains. From watching deadlocks unfold in Profiler. From tuning queries where a missing index was not the answer because the entire data model needed to be rethought. That kind of thinking was never mechanical. It was deeply personal. It was the craft of performance tuning. Messy, hard-won, and irreplaceable.

So when a machine begins to replicate parts of that thinking, even partially, it touches something beyond convenience.

It makes you question whether what you believed was uniquely earned can now be simulated.

That is not an easy feeling to sit with.

The Execution Plan of the Ego

There is a quiet comparison that happens when you read AI-generated analysis of a complex SQL Server issue. You read it and instinctively measure it against your own thinking. You ask yourself, would I have explained the memory grant issue this way? Would I have pointed at the Nested Loops operator first? Sometimes you feel reassured because you spot the gaps. The AI suggests creating an index without considering write overhead on a busy OLTP system. It does not understand that this database runs 24×7 and cannot afford heavy index maintenance windows. It does not factor in the fragmentation that a wide nonclustered index will introduce on tables with high insert rates.

But sometimes, and this is the uncomfortable part, the explanation is clean. Logical. Structured. It reads like something you would confidently present in a performance review meeting.

And in that moment, your ego runs its own little execution plan. It calculates your value. It estimates your uniqueness. It checks its own cost model.

If clarity can be generated in seconds, what exactly is your edge now?

That question is not really about job security. It is about identity.

Information Is Not the Same as Judgment

AI can scan massive volumes of SQL Server documentation in an instant. It can explain the differences between READ COMMITTED SNAPSHOT and SERIALIZABLE isolation levels. It can talk about fragmentation thresholds, fill factor adjustments, statistics update strategies, and Query Store baselines. It can even walk through troubleshooting steps using DMVs in a way that sounds remarkably competent.

But it does not sit in the room when a wrong index decision causes write latency to climb across the entire OLTP workload. It does not remember the production outage three years ago where a well-intentioned index change triggered unexpected lock escalation during peak hours. It does not feel the weight of looking a business leader in the eye and telling them that their reporting query is fundamentally flawed, and that the fix is a redesign, not a hardware upgrade.

Experience changes how you think. Not just what you know, but how carefully you apply it.

When I recommend creating an index, I am not thinking about that one query alone. I am thinking about write overhead, maintenance windows, fragmentation behaviour, rebuild strategy, storage impact, and long-term scalability. That layered thinking comes from consequence. From having made decisions that went wrong and learning from them in real time, under real pressure.

Consequence cannot be simulated.

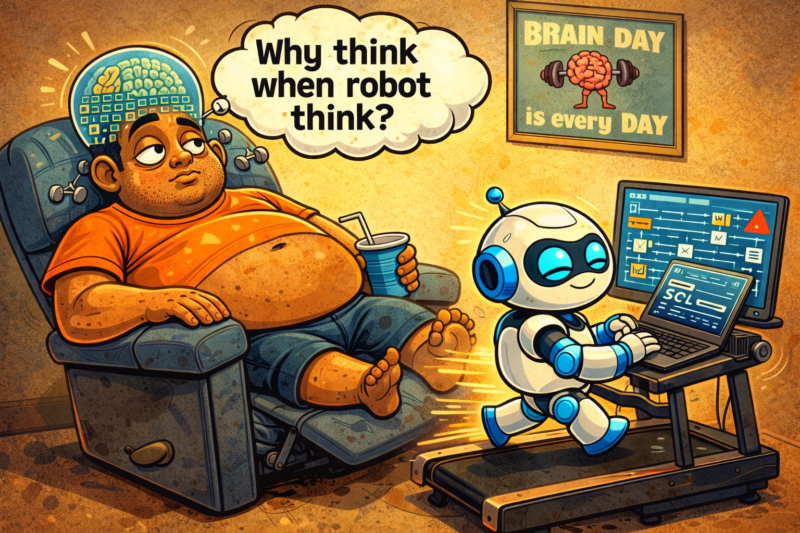

The Danger of Relying on Instant Answers

There is another uncomfortable truth here. AI reduces friction. And friction is exactly where expertise gets sharpened.

When I first learned performance tuning, I manually inspected execution plans operator by operator. I traced each arrow. I looked at estimated subtree cost and compared it against actual runtime behaviour. I learned, slowly and painfully, to correlate wait stats with workload patterns. That struggle built instinct. It trained my internal model of how SQL Server behaves under stress.

If I now rely entirely on AI-generated summaries to interpret execution plans, I will certainly save time. But will my internal model stay sharp? Will I still be able to diagnose a complex concurrency issue without outside help? Or will my brain slowly offload pattern recognition to an external system, the way muscles weaken when you stop using them?

This is not about rejecting AI. I am not arguing for that.

It is about protecting cognitive depth.

Because output can remain high while depth quietly decreases. And depth is what makes expertise resilient, especially in the moments that matter most.

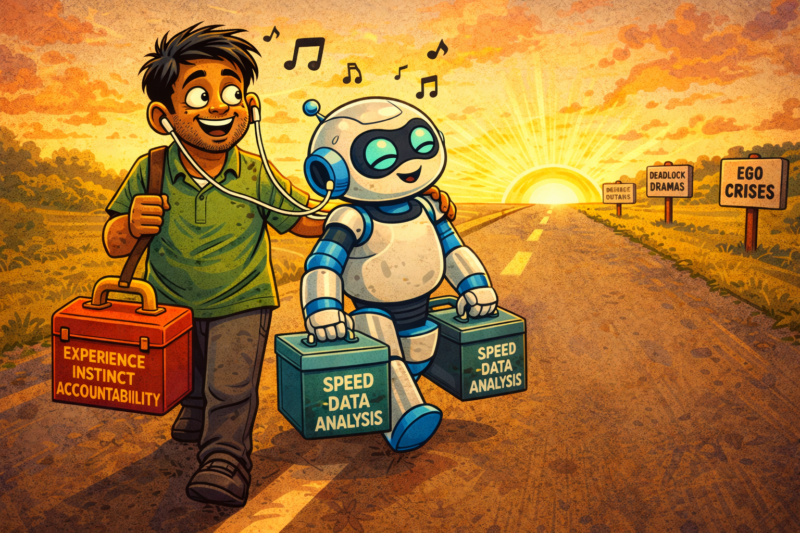

From Solver to Strategist

Over time, I have started to see this shift differently. AI is not replacing my thinking. It is changing my role.

Earlier, I was the primary solver. I was the one who found the answer. Now, I am increasingly the strategist. AI can suggest ten tuning options. It can propose query rewrites, index additions, MAXDOP adjustments, and changes to cost threshold for parallelism. It can generate those recommendations quickly and coherently.

But I decide which lever to pull.

I decide whether a plan guide makes sense for this particular scenario. I decide whether forcing a plan through Query Store is safe given this workload’s volatility. I decide whether the right answer is a configuration change, an architectural redesign, or an honest conversation with the development team about how their code interacts with data.

Decision-making under uncertainty remains deeply human. And as the number of available options multiplies, judgement does not become less important. It becomes more critical.

The Real Evolution

The strange emotional shift of working alongside a machine that “thinks” is not dramatic or loud. It does not arrive in a single moment. It is gradual and deeply personal. It forces you to sit quietly with yourself and examine what part of your expertise is information and what part is wisdom.

When I look at a slow SQL Server today, I still open the execution plan manually. I still examine wait stats. I still think carefully about memory grants, parallelism, index design, and workload patterns. Those habits are not going anywhere.

But I also allow myself to collaborate. I use AI to pressure-test my assumptions, to explore alternate approaches I might not have considered, to draft structured explanations faster than I could on my own. And then I layer my experience on top of it.

The machine may suggest an index. But it does not carry the responsibility of implementing it in production at 2 AM when hundreds of users are active. It may produce reasoning about deadlocks or blocking chains. But it does not carry the memory of past incidents that make you cautious. The ones that taught you to pause before acting, even when you are confident.

It does not feel the pressure of accountability. That remains entirely human.

In the end, this shift is not really about machines becoming intelligent. It is about us becoming more intentional about how we think. If we use AI carelessly, it will slowly replace depth with convenience. If we use it consciously, it will amplify decades of hard-earned experience into something even more powerful.

The choice is not technical. It is psychological.

And that choice still belongs to us.

Reference: Pinal Dave (https://blog.sqlauthority.com), X (twitter).

3 Comments

Very nicely written

This is a truly well‑structured post that clearly highlights the difference between how the human mind and a machine think and make decisions. When we talk about the superpower of AI, people often get carried away by its speed, analytical capability, and impressive recommendations.

But as humans, if we start accepting those suggestions blindly, that’s where the real decline of human reasoning begins. The accountability and impact of any decision ultimately rest with us, because we are the final decision-makers.

AI should absolutely be used for support, insights, and suggestions — but as you rightly said, we must take the final call with a complete 360‑degree understanding of the situation.

Thank you again for sharing this insightful and impactful article. It genuinely made my day.

Exactly! It's complementing, not competing. We know our business well; AI is our helping hand.