[Notes from Pinal]: Lots of people think that SSIS is all about arranging various operations together in one logical flow. Well, the understanding is absolutely correct, but the implementation of the same is not as easy as it seems. Similarly most of the people think lookup component is just component which does look up for additional information and does not pay much attention to it. Due to the same reason they do not pay attention to the same and eventually get very bad performance.

Linchpin People are database coaches and wellness experts for a data driven world. In this 28th episode of the Notes from the Fields series database expert Tim Mitchell (partner at Linchpin People) shares very interesting conversation related to how to write a good lookup component with Cache Mode.

Linchpin People are database coaches and wellness experts for a data driven world. In this 28th episode of the Notes from the Fields series database expert Tim Mitchell (partner at Linchpin People) shares very interesting conversation related to how to write a good lookup component with Cache Mode.

In SQL Server Integration Services, the lookup component is one of the most frequently used tools for data validation and completion. The lookup component is provided as a means to virtually join one set of data to another to validate and/or retrieve missing values. Properly configured, it is reliable and reasonably fast.

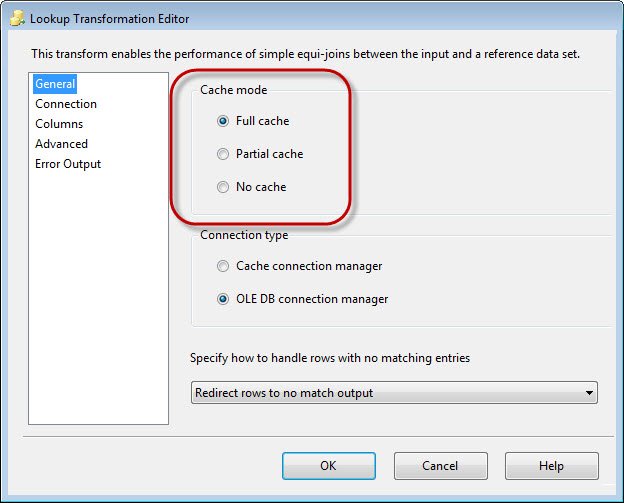

Among the many settings available on the lookup component, one of the most critical is the cache mode. This selection will determine whether and how the distinct lookup values are cached during package execution. It is critical to know how cache modes affect the result of the lookup and the performance of the package, as choosing the wrong setting can lead to poorly performing packages, and in some cases, incorrect results.

Full Cache

The full cache mode setting is the default cache mode selection in the SSIS lookup transformation. Like the name implies, full cache mode will cause the lookup transformation to retrieve and store in SSIS cache the entire set of data from the specified lookup location. As a result, the data flow in which the lookup transformation resides will not start processing any data buffers until all of the rows from the lookup query have been cached in SSIS.

The most commonly used cache mode is the full cache setting, and for good reason. The full cache setting has the most practical applications, and should be considered the go-to cache setting when dealing with an untested set of data. With a moderately sized set of reference data, a lookup transformation using full cache mode usually performs well. Full cache mode does not require multiple round trips to the database, since the entire reference result set is cached prior to data flow execution.

There are a few potential gotchas to be aware of when using full cache mode. First, you can see some performance issues – memory pressure in particular – when using full cache mode against large sets of reference data. If the table you use for the lookup is very large (either deep or wide, or perhaps both), there’s going to be a performance cost associated with retrieving and caching all of that data. Also, keep in mind that when doing a lookup on character data, full cache mode will always do a case-sensitive (and in some cases, space-sensitive) string comparison even if your database is set to a case-insensitive collation. This is because the in-memory lookup uses a .NET string comparison (which is case- and space-sensitive) as opposed to a database string comparison (which may be case sensitive, depending on collation). There’s a relatively easy workaround in which you can use the UPPER() or LOWER() function in the pipeline data and the reference data to ensure that case differences do not impact the success of your lookup operation. Again, neither of these present a reason to avoid full cache mode, but should be used to determine whether full cache mode should be used in a given situation.

Full cache mode is ideally useful when one or all of the following conditions exist:

- The size of the reference data set is small to moderately sized

- The size of the pipeline data set (the data you are comparing to the lookup table) is large, is unknown at design time, or is unpredictable

- Each distinct key value(s) in the pipeline data set is expected to be found multiple times in that set of data

Partial Cache

When using the partial cache setting, lookup values will still be cached, but only as each distinct value is encountered in the data flow. Initially, each distinct value will be retrieved individually from the specified source, and then cached. To be clear, this is a row-by-row lookup for each distinct key value(s).

This is a less frequently used cache setting because it addresses a narrower set of scenarios. Because each distinct key value(s) combination requires a relational round trip to the lookup source, performance can be an issue, especially with a large pipeline data set to be compared to the lookup data set. If you have, for example, a million records from your pipeline data source, you have the potential for doing a million lookup queries against your lookup data source (depending on the number of distinct values in the key column(s)). Therefore, one has to be keenly aware of the expected row count and value distribution of the pipeline data to safely use partial cache mode.

Using partial cache mode is ideally suited for the conditions below:

- The size of the data in the pipeline (more specifically, the number of distinct key column) is relatively small

- The size of the lookup data is too large to effectively store in cache

- The lookup source is well indexed to allow for fast retrieval of row-by-row values

No Cache

As you might guess, selecting no cache mode will not add any values to the lookup cache in SSIS. As a result, every single row in the pipeline data set will require a query against the lookup source. Since no data is cached, it is possible to save a small amount of overhead in SSIS memory in cases where key values are not reused. In the real world, I don’t see a lot of use of the no cache setting, but I can imagine some edge cases where it might be useful.

As such, it’s critical to know your data before choosing this option. Obviously, performance will be an issue with anything other than small sets of data, as the no cache setting requires row-by-row processing of all of the data in the pipeline.

I would recommend considering the no cache mode only when all of the below conditions are true:

- The reference data set is too large to reasonably be loaded into SSIS memory

- The pipeline data set is small and is not expected to grow

- There are expected to be very few or no duplicates of the key values(s) in the pipeline data set (i.e., there would be no benefit from caching these values)

Conclusion

The cache mode, an often-overlooked setting on the SSIS lookup component, represents an important design decision in your SSIS data flow. Choosing the right lookup cache mode directly impacts the fidelity of your results and the performance of package execution. Know how this selection impacts your ETL loads, and you’ll end up with more reliable, faster packages.

If you want me to take a look at your server and its settings, or if your server is facing any issue we can Fix Your SQL Server.

Reference: Pinal Dave (https://blog.sqlauthority.com)

3 Comments. Leave new

You don’t mention another very important reason for using No Cache. If the process is updating the data set used in the lookup, you do not want to cache, either full or partial. Indeed, caching would cause errors because the cached values would be missing any updates the process has done.

I have a small doubt. I want to load the the target table in case if the record is not found. so that here my lookup table and target table are one and the same. Now in the pipe line there are two duplicate records and they are no where in target. in case of partial cache or no cache,when the first record comes there is no matching record in cache so hit the db and searches for the value in lookup table(i.e target here) . Not found so inserted into target. Now the case when the same record comes, no match in the cache so need to hit the db. But now we have the matched record in the lookup/target. so no need to load. But it loads the second duplicate records too..

Please explain the processs..

thanks in advance..

During lookup transformation process: which data will be stored in cache–Pipeline data or Reference data..