Probably, everyone who writes SQL code has used INSERT statement, which alongside SELECT, UPDATE and DELETE, is a part of a basic operations’ set for data manipulation.

At the first sight syntax of the INSERT statement may seem very trivial , especially when inserting one record – INSERT INTO … VALUES … .

It’s not surprising, whereas in SQL Server 2005 basic syntax the VALUES keyword was applied only in the context of inserting records through using the INSERT statement. However, after the release of SQL Server 2008 the basic syntax of T-SQL was considerably expanded. Owing to this, usage of the multiline VALUES statement became possible not only for record insertion.

This article will focus on several possible cases of the VALUES statement usage. To present an unbiased assessment of the advantages of the VALUES statement, we will use built-in Profiler of dbForge Studio for SQL Server to look at the query plan for each particular example.

We have a table with the following structure, and we need to find the maximum amount of medals (disregarding their value) for each player:

IF OBJECT_ID ('dbo.Players') IS NOT NULL

DROP TABLE dbo.Players;

CREATE TABLE dbo.Players

(

PlayerID INT

, GoldBadge SMALLINT

, SilverBadge SMALLINT

, BronzeBadge SMALLINT

);

INSERT INTO dbo.Players (PlayerID, GoldBadge, SilverBadge, BronzeBadge)

VALUES

(1, 5, 4, 0),

(2, 0, 9, 1),

(3, 2, 4, 10);

To accomplish the task we need to convert columns into rows, so that afterwards we are able to apply the MAX aggregation function.

At first, let’s look at the example that is very common among beginners:

SELECT

t.PlayerID

, MaxBadgeCount = MAX(t.Badge)

FROM (

SELECT PlayerID, Badge = GoldBadge

FROM dbo.Players

UNION ALL

SELECT PlayerID, SilverBadge

FROM dbo.Players

UNION ALL

SELECT PlayerID, BronzeBadge

FROM dbo.Players

) t

GROUP BY t.PlayerID

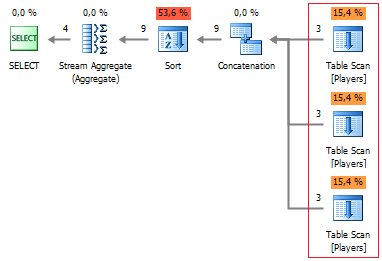

Note, instead of reading data once, we are reading it three times from the table .

Starting from SQL Server 2005, the UNPIVOT operator is generally used to convert columns into rows:

SELECT

t.PlayerID

, MaxBadgeCount = MAX(t.BadgeCount)

FROM (

SELECT *

FROM dbo.Players

UNPIVOT (

BadgeCount FOR Badge IN (

GoldBadge

, SilverBadge

, BronzeBadge

)

) unpvt

) t

GROUP BY t.PlayerID

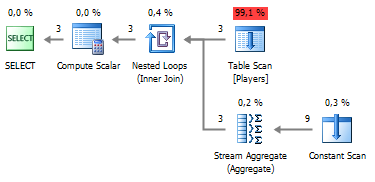

Let’s look at the plan:

Now data from the table is read only once and that is what we’ve been trying to achieve. However, there is still one more bottleneck left in our query (the most resource-consuming operation) – it’s the Sort operation which allows a server to detect the element with maximal value.

Let’s try to avoid row sorting using the VALUES statement.

SELECT

p.PlayerID

, MaxBadgeCount = (

SELECT MAX(BadgeCount)

FROM (

VALUES

(p.GoldBadge)

, (p.SilverBadge)

, (p.BronzeBadge)

) t (BadgeCount)

)

FROM dbo.Players p

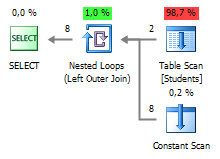

As we can see, sorting is not applied here.

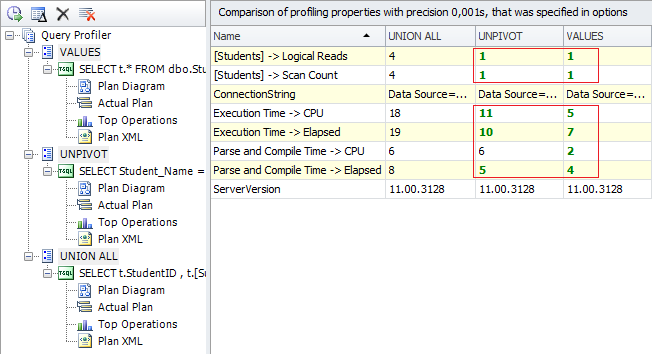

Let’s compare our queries and the results they return

Let’s examine the next sample, where we need to format row-wise data output in the grouped table.

IF OBJECT_ID ('dbo.Students') IS NOT NULL

DROP TABLE dbo.Students;

CREATE TABLE dbo.Students

(

StudentID INT

, Subject1 SMALLINT

, Subject2 SMALLINT

, Subject3 SMALLINT

, Subject4 SMALLINT

, Total SMALLINT

);

INSERT INTO dbo.Students (StudentID, Subject1, Subject2, Subject3, Subject4, Total)

VALUES

(1, 85, 94, 78, 90, 347), (2, 75, 88, 91, 78, 332)

We will get the following result:

We can solve this task by reading the data several times using UNION ALL with sorting:

SELECT

t.StudentID

, t.[Subject]

, t.Total

FROM (

SELECT ID = StudentID, StudentID, [Subject] = Subject1, Total, rn = 1

FROM dbo.Students

UNION ALL

SELECT StudentID, NULL, Subject2, NULL, 2

FROM dbo.Students

UNION ALL

SELECT StudentID, NULL, Subject3, NULL, 3

FROM dbo.Students

UNION ALL

SELECT StudentID, NULL, Subject4, NULL, 4

FROM dbo.Students

) t

ORDER BY t.ID, t.rn

We can also go back to the UNPIVOT statement with checking line numbers:

SELECT

Student_Name = CASE WHEN rn = 1 THEN t.StudentID END

, t.[Subject]

, Total = CASE WHEN rn = 1 THEN t.Total END

FROM (

SELECT

StudentID

, [Subject]

, Total

, rn = ROW_NUMBER() OVER (PARTITION BY StudentID ORDER BY 1/0)

FROM dbo.Students s

UNPIVOT (

[Subject] FOR tt IN (

Subject1,

Subject2,

Subject3,

Subject4

)

) unpvt

) t

However, the VALUES statement allows creating a more sophisticated query:

SELECT t.*

FROM dbo.Students

OUTER APPLY (

VALUES

(StudentID, Subject1, Total)

, (NULL, Subject2, NULL)

, (NULL, Subject3, NULL)

, (NULL, Subject4, NULL)

) t (Student_Name, [Subject], Total)

Let’s take a look at the plan and compare the results:

The VALUES statement cannot be considered a full substitute to UNPIVOT statement as well as a savior in other cases. It all goes down to the query plan, and analyzing it with the help of Profiler, available in dbForge Studio for SQL Server, allows detecting potential bottlenecks in query productivity.

Reference: Pinal Dave (https://blog.sqlauthority.com)

4 Comments. Leave new

It seems you’re missing the SQL being executed… I can see the plan, but I don’t see the SQL that the plan is for…

Where are the SQL queries ??

It seems everything is correct for now. Thanks a lot for the article!

Really cool examples. Thanks for the post.