Answer simple quiz at the end of the blog post and –

Every day one winner from India will get Joes 2 Pros Volume 3.

Every day one winner from United States will get Joes 2 Pros Volume 3.

Real Life Statistics

We are not surprised to see warm ski jackets appearing on display shelves starting in September. It’s not yet cold, but we know that winter time is a few months away based on our own recollection of the weather, which we’ve observed in previous seasons and prior years. Our own memory of temperature and weather patterns is a knowledge store we informally draw upon when planning for steps we will take before the cold weather arrives (e.g., pull your boots, mittens, scarves, and heavy jacket out of storage after Halloween; winterize your vehicle prior to November by flushing the car radiator and checking the condition of your snow tires; if it snows before U.S. Thanksgiving (fourth Thursday of November), then you know it likely will be a harsh winter; etc.). By sampling existing data, we can make reasonable decisions about things which have not yet happened.

This is precisely what SQL Server does when it comes to statistics. Similar to how we might step outdoors to sample the temperature, SQL Server observes your data to understand how selective certain values are within a field. With these statistics collected, SQL Server’s query optimizer can make good seek and scan decisions on fields with covering indexes.

SQL Statistics

SQL Server looks at the data in its tables long before you run your first SELECT statement. Because it’s already done this pre checking, SQL Server knows how to best run a query when the time comes. Sampling of this data is stored in statistics, so the query optimizer can make the right decisions.

Sampling to save time is something we do in our daily lives. How long do you think it will take you to drive to work in the morning? You already have a good estimate, because you have done this many times. We frequently need to predict how long it will take to do some combination of errands we have never done before. Suppose, for example, you have your first appointment with a new dentist in the morning and need to pick up your dog from the poodle parlor afterwards. Based on your sampling of the general area, your knowledge of traffic patterns in the area, the weather, and the time of day, you estimate how long it will take and the best way to go. SQL Server takes data samples, as a fraction of the real data in a table, so the query optimizer can decide the best way to run a query. These small samplings of data from a table are known as Statistics.

Shown here are the first 12 records of the SalesInvoiceDetail table. By visually inspecting this figure, we can sample our way to a few conclusions. The values in the InvoiceDetailID field isunique, which means those values in that field are highly selective. The InvoiceID field looks fairly selective with just 5 distinct values. The values in Quantity repead many times appear less selective. With this very small amount of data, it’s hard to tell for certain. We probably should sample more data to know which fields are selective enough to benefit from an index seek. Statistics are used by the Query Optimizer to know how selective certain query results will be based on their criteria.

If there were millions of records in the table and you had just five records with an InvoiceID of 1, that’s highly selective. If that query has a covering index on that type of selective field then it will benefit by doing a seek operation. But in order to know exactly how selective the values are within each field, SQL Server would first have to scan the entire table of millions of records. Scanning your table then generating statistics would be slower than just scanning the table when the query is run. Because of the statistics which SQL Server has gathered, the query optimizer knows how selective each value in each field is without doing an entire scan of the table at query time.

Sometimes, statistics are gathered by scanning the entire table and sometimes, statistics are gathered by scanning a sample of a table. Right now, the JProCo database reflects a retail operation with small scale customers whose invoices contain just a few products, at most. If we later shifted to a wholesale operation, where we had invoices with thousands of products on each bulk order, then you could have the same InvoiceID value repeated for each line item of a single order. Today our table shows that InvoiceID is a selective field; however it could slowly become less and less selective as time goes on and we start doing bulk sales. Statistics need to constantly be updated to know the most recent selectivity information for an index. If a field does not have an index, then there is no urgent need to retain statistics: any query which predicates on the field will always use a scan instead of a seek.

The bigger the table, the more statistics there will be for each index on the table. To easily see how the statistics are created for a table, let’s choose a small table in JProCo. The HumanResources.RoomChart table has just eight records. Looking closer at these records, we see an ID which is very selective – in fact, this ID is unique. We have a field called [Code] which is also unique. The field RoomName is unique. None of these first three fields contains a duplicate value: each field has 8 different values. The NULL value is duplicated several times in the RoomNotes field and RoomDescription fields.

Statistics Metadata

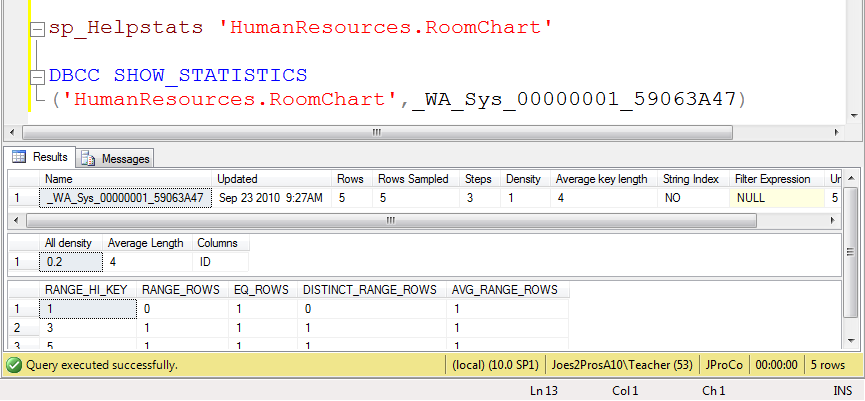

SQL Server has a handy system stored procedure (“sproc”) for showing the available statistics for a table. Let’s run sp_Helpstats to see some statistics for the HumanResources.RoomChart table.

Notice this statistic (_WA_Sys_00000001_59063A47) is on the first field of the HumanResources.RoomChart table. As well, it is the only statistic currently being tracked for this table – SQL Server isn’t tracking the other fields with statistics. None of the fields have an index on this table.

Histogram

To look at the statistics details of the HumanResources.RoomChart table, use the DBCC SHOW_STATISTICS. You will need to supply the table name and the name of the statistic. Pass in each value as a parameter. Keep in mind our table has ID values 1 through 8, all of which are unique.

In the second result set we see the ID column has four bytes, because the ID field of the HumanResources.RoomChart is an integer. The bottom result set is known as a histogram which shows you the spread of values for a field, and how many values are repeated for that field. For example, ID 1 appears once. ID 3 is listed once, as is ID 5. Notice it didn’t list all of the IDs; we see just a sampling.

In this histogram you see records with a small number of rows for each value. In fact there is only 1 EQ_ROWS count for each value, which signifies that this data is selective. However, if you see very few values with a high EQ_ROWS count, this indicates low selectivity. Note for each ID, the count of EQ_ROWS in this histogram is 1.

Updating Statistics

As new data gets added to a table the histogram record counts can get out of date. SQL Server already updates statistics as necessary to keep the histogram up to date with the data in the table that it represents. The auto-update statistics option is less than perfect, so you have the option of updating it at the time of your choosing. One way to get better statistics is to manually update the statistics for the table using the UPDATE STATISTICS command. The example below would update all the statistics for the Customer table:

UPDATE STATISTICS Customer

Note: If you want to setup the sample JProCo database on your system you can watch this video. For this post you will want to run the SQLArchChapter12.0Setup.sql script from Volume 3.

Question 21

What advantage do SQL statistics offer the query optimizer?

- They allow the table to save space.

- The query optimizer knows the selectivity level of values before a query is run.

- They show performance statistics history since the last SQL restart.

- They prevent page splits by buffer data page memory for later.

Rules:

Please leave your answer in comment section below with correct option, explanation and your country of resident.

Every day one winner will be announced from United States.

Every day one winner will be announced from India.

A valid answer must contain country of residence of answerer.

Please check my facebook page for winners name and correct answer.

Winner from United States will get Joes 2 Pros Volume 3.

Winner from India will get Joes 2 Pros Volume 3.

The contest is open till next blog post shows up at which is next day GTM+2.5.

Reference: Pinal Dave (https://blog.sqlauthority.com)

49 Comments. Leave new

Answer is:

The query optimizer knows the selectivity level of values before a query is run.

Vinay

Pune, India

Answer is:

2. The query optimizer knows the selectivity level of values before a query is run.

Vinay

Pune, India

Ans : Option 2

2.The query optimizer knows the selectivity level of values before a query is run.

Rajesh

From India

Answer 2

2.The query optimizer knows the selectivity level of values before a query is run.

Country : India

Hi Sir,

The correct answer is option no 2.

The query optimizer knows the selectivity level of values before a query is run.

Because of the statistics which SQL Server gather

the query optimizer knows how selective each value in each field is without doing an entire scan of the table at query time.

P.Anish Shenoy,

INDIA, Bangalore, Karnataka

How do you find out port id and ip address in sql server 2008

The correct option is #2

The query optimizer knows the selectivity level of values before a query is run.

Sampling is done to generate statistics on a table so that the query optimizer knows the best way to execute the query using statistics.

Country of Residence: USA